What SOA Taught Us About AI Agent Interoperability

Agent and MCP Interoperability: Governance at Scale

Recently, I was talking with a Salesforce data and AI architect I respect when a question came up: is “MCP-to-MCP integration” a relevant topic during AI platform selection? My answer was yes: this is the right time to discuss it, and the timing matters.

Architects need to understand whether their vendors are designing for the future, even when early projects don’t yet depend on agent-to-agent communication. For a blueprint, we don’t have to look far. The evolution of web services and Service-Oriented Architecture (SOA) offers a hard-won lesson in what it actually takes to govern interoperability at scale.

That conversation is what pushed me to write this article. I hope it serves you well.

Agent-to-agent Interoperability (and where MCP fits)

When people say agent-to-agent interoperability, they’re usually describing a simple idea: multiple autonomous components (agents, tools, and services) can communicate and coordinate to complete a task—without being hard-wired together one integration at a time. This matters because most real-world outcomes require more than one capability. A single agent might be great at conversation, but it still needs to:

- Retrieve or verify information from enterprise systems

- Call tools or services to take action

- Hand off work across functions (and sometimes across organizations)

There are multiple ways this interoperability can be implemented: APIs, events, queues, and emerging agent protocols. Model Context Protocol (MCP) is one emerging approach that aims to standardize how an agent runtime discovers and uses tools and context providers. It may become a standard, a partial standard, or simply one influence, but it’s worth understanding because it points to the direction of travel.

Thinking about Agentic Interoperability Today

Today, most agentic implementations are happening within individual departments or functions. However, most organizations will not succeed with a single, monolithic “do-everything” LLM or model.

Over time, organizations will need interdepartmental interoperability, as well as the ability to communicate with trusted, designated suppliers, partners, and customers. This mirrors the evolution from purpose-specific business applications to customer portals that unified information across systems.

As agentic AI capabilities (and our understanding of what they can achieve) expand, the winning pattern looks much more like modern software architecture:

- Specialized agents that are optimized for a narrow domain (service triage, pricing guidance, fraud review, knowledge retrieval, workflow execution)

- Abstraction layers that hide underlying system complexity (data sources, policies, tools, models) so teams can iterate without rebuilding everything

- Interoperability so agents and tools can work together across products, teams, and even vendors without fragile, point-to-point custom wiring

As architects, we must help our organizations think beyond “prompt engineering” and design the abstractions that guide both data fitness-for-purpose and interoperability.

Today, many of these conversations are about MCP. We don’t (and can’t) know whether MCP will become the standard, a standard, or simply one influence on how this space evolves, but the way it extends familiar integration concepts makes it a valuable learning and planning opportunity.

At a practical level, MCP aims to provide a consistent way for an agent (or agent runtime) to discover and use tools and capabilities exposed by another system, while making it easier to manage contracts, policy, and change over time. Architecturally, that means:

- A consistent way to connect specialized capabilities

- A contract model that can evolve

- A governance and observability model that can scale
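To make the contract idea concrete, here is a minimal sketch of the JSON-RPC 2.0 messages MCP uses to discover tools (`tools/list`) and invoke one (`tools/call`), plus a simple client-side check against the tool’s declared input schema. The message shape follows the published MCP specification; the `get_shipment_status` tool, its schema, and the tracking number are hypothetical, and transport, session initialization, and authentication are omitted.

```python
import json

# Hypothetical tool descriptor, shaped like one entry in an MCP `tools/list` result:
# a name, a human-readable description, and a JSON Schema describing the inputs.
SHIPMENT_TOOL = {
    "name": "get_shipment_status",  # hypothetical tool name
    "description": "Return carrier tracking events for a shipment.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "carrier": {"type": "string"},
            "tracking_number": {"type": "string"},
        },
        "required": ["carrier", "tracking_number"],
    },
}


def list_tools_request(request_id: int) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server which tools it exposes."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def call_tool_request(request_id: int, name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request that invokes a single tool by name."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def missing_required_args(tool: dict, arguments: dict) -> list:
    """Client-side contract check: which required inputs are absent?"""
    required = tool["inputSchema"].get("required", [])
    return [field for field in required if field not in arguments]


if __name__ == "__main__":
    args = {"carrier": "FedEx", "tracking_number": "HYPOTHETICAL-123"}
    assert missing_required_args(SHIPMENT_TOOL, args) == []
    print(json.dumps(call_tool_request(2, SHIPMENT_TOOL["name"], args), indent=2))
```

The point is the contract: a tool publishes a name, a description, and a machine-readable input schema, so a caller can validate before it calls instead of discovering breakage in production.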

If you’re an architect planning an AI-enabled solution architecture, understanding what MCP is, and how MCP-to-MCP interoperability might work, is essential for making investment decisions that won’t paint you into a corner. If you don’t plan for interoperability, you risk building point-to-point solutions you will later need to rebuild.

Where is My Order: A Tried & True Example to Ground Ourselves

Let’s take a familiar example we’ve all lived through as consumers, and perhaps as architects. Think about a typical eCommerce purchase. Behind the scenes, there are multiple specialized applications working together:

- An eCommerce storefront (where you place the order)

- An order management system (where the order is routed and fulfilled)

- A customer support system (where issues are handled)

- One or more shipping providers (FedEx, UPS, DHL, regional carriers)

Over the past three decades, we stitched these systems together with increasingly advanced integration technologies, yet the customer experience remains incomplete. Consider a customer who reports a shipping exception: “My order shows delivered, but I don’t have it.”

Approach with web services or APIs

In many customer portals, some information is copied into the company’s systems (for example, order number, items, address, payment status, case history). Other information is often a federated lookup, especially shipment tracking. That’s why so many portals show a tracking status and then link you out to a carrier site like FedEx for the details. This works fine, until it doesn’t.

When there’s an issue, the customer gets stuck between systems: the portal says “delivered,” the carrier page might show something ambiguous, support can’t immediately see the full chain of evidence, and the warehouse or logistics team is working in a separate queue. Everyone has some information, but not the same information, and not always at the same moment.

Enhancing outcomes with agents: Agents can make this experience meaningfully better by doing what customers and support reps shouldn’t have to do: gather evidence across systems and keep the right people in sync.

Instead of asking the customer to click around (or asking a rep to swivel-chair between tools), an agent can:

- Identify the shipping provider(s) for the order

- Pull the right tracking events and proof-of-delivery signals

- Compare what the storefront shows vs. what the carrier shows vs. what the OMS shows

- Surface what changed and what action is appropriate

- Notify or route to the right stakeholders (support, logistics, fraud, refunds) with the evidence attached

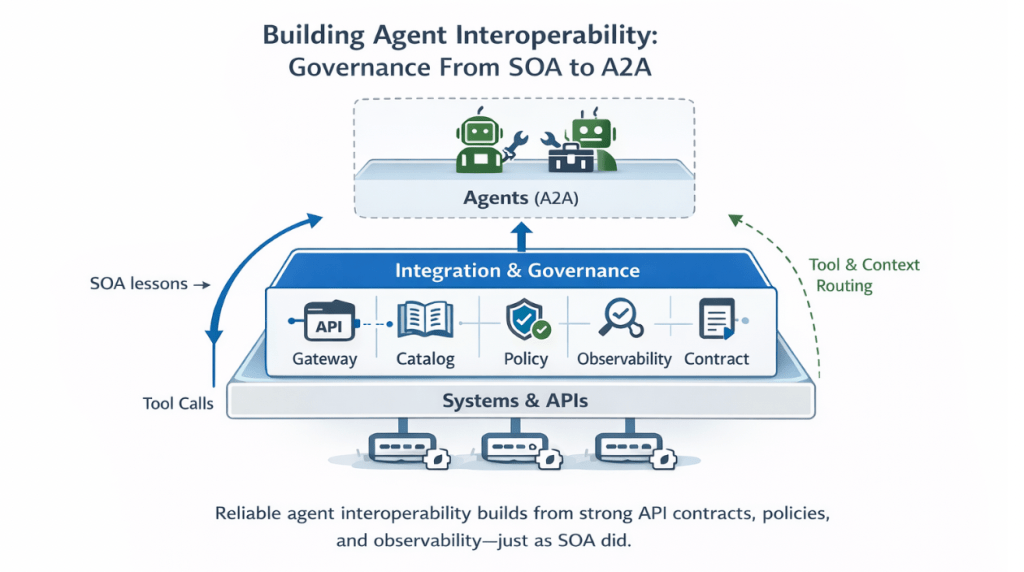
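The agent steps listed above can be sketched as a small reconciliation routine. This is a hypothetical illustration, not an Agentforce or MuleSoft API: `Evidence`, `Finding`, the normalized status strings, and the routing rules are all assumptions, and in practice each snapshot would come from a storefront, OMS, or carrier lookup (for example, an MCP tool call).

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """One system's view of the order (hypothetical shape)."""
    source: str                      # e.g. "storefront", "oms", "carrier:FedEx"
    status: str                      # normalized status, e.g. "delivered", "shipped"
    proof_of_delivery: bool = False  # signature/photo on file (carrier sources only)


@dataclass
class Finding:
    """What the agent surfaces: whether systems agree, and who should see the evidence."""
    consistent: bool
    statuses: dict
    route_to: list
    evidence: list


def reconcile(snapshots: list, customer_reports_missing: bool) -> Finding:
    """Compare storefront, OMS, and carrier views and pick stakeholders to notify.

    The routing rules are illustrative assumptions, not a recommended policy.
    """
    statuses = {e.source: e.status for e in snapshots}
    consistent = len(set(statuses.values())) == 1
    carrier_delivered = [
        e for e in snapshots
        if e.source.startswith("carrier:") and e.status == "delivered"
    ]

    route_to = []
    if not consistent:
        route_to.append("support")  # systems disagree: a person should review
    if customer_reports_missing and carrier_delivered:
        if all(e.proof_of_delivery for e in carrier_delivered):
            route_to.append("fraud")      # proof on file vs. a missing-package claim
        else:
            route_to.append("logistics")  # delivery scan without proof: chase the carrier
    if customer_reports_missing and not route_to:
        route_to.append("support")  # nothing conclusive: default to a human
    return Finding(consistent, statuses, route_to, list(snapshots))


if __name__ == "__main__":
    finding = reconcile(
        [
            Evidence("storefront", "delivered"),
            Evidence("oms", "shipped"),
            Evidence("carrier:FedEx", "delivered", proof_of_delivery=False),
        ],
        customer_reports_missing=True,
    )
    print(finding.statuses, finding.route_to)
```

The evidence list travels with the routing decision, so whoever receives the case sees the same facts the agent compared rather than re-gathering them.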

The goal isn’t to replace APIs. It’s to improve outcomes by using the infrastructure we already have—while applying the hard lessons we learned the first time around: discoverability, contracts, security, observability, resilience, and governance.

Service-Oriented Architecture (SOA): A Clear Lens for MCP

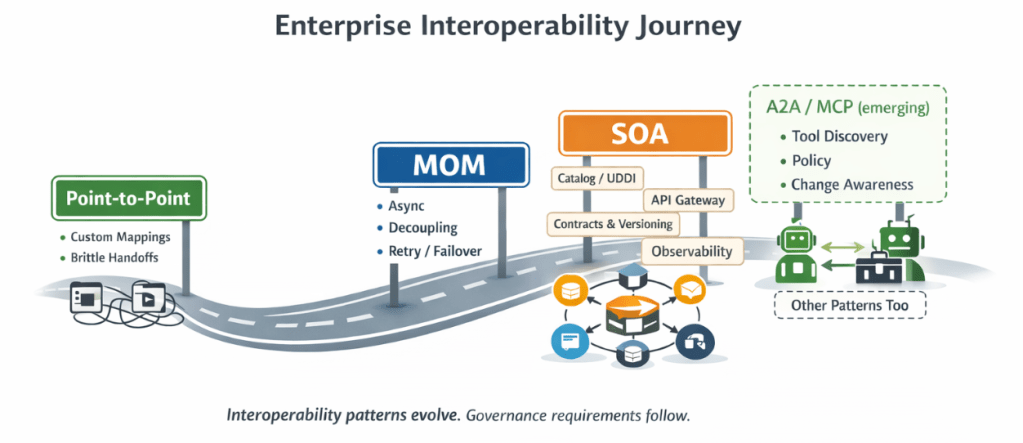

When I think about agent-to-agent (A2A) or MCP-to-MCP integration needs, I immediately think about how SOA evolved. After all, we’ve been here before. As enterprises matured, we moved from point-to-point integration to service-oriented patterns and message-oriented middleware.

Along the way, we learned a key lesson: scale and reuse require governance, and governance requires honesty about its limits and overhead.

The goal of this article is not to debate whether SOA was a success or a failure. It is important, however, to recognize that SOA’s benefits took longer to realize because teams underestimated the operational needs and infrastructure required to make service reuse real. MCP integration is likely to follow similar dynamics. The details are new, but the underlying question is familiar.

What Robust SOA Delivered (and What to Look for Again)

SOA was not just “we have APIs.” Mature SOA programs delivered repeatable capabilities that helped large organizations scale integration without reinventing controls every time. Here are the capabilities I look for as a baseline, and why they matter in an agent-to-agent future. (Remember: MuleSoft has been here before, too.)

| SOA capability (then) | Why it mattered (then) | Why it matters for agents & MCP (now) |
|---|---|---|
| Service catalog & discoverability (UDDI-era concepts, portals, catalogs) | Teams could find and reuse services instead of building duplicates. | Agent ecosystems need a reliable way to know what tools exist, what they do, and when they’re safe to call. |
| Contracting & versioning (schemas, error models, lifecycle policy) | Breaking changes quickly destroyed trust in shared services. | MCP tools and agent behaviors will evolve quickly; without stable contracts and deprecation policy, you get brittle behaviors and silent regressions. |
| Security & policy enforcement (authN/authZ, centralized policies, audit) | Services were only reusable if security was standardized. | Agent-to-agent calls often represent delegated action; policy must be consistent, testable, and auditable. |
| Observability & traceability (tracing, correlation IDs, logs, SLOs) | Without visibility, you couldn’t debug or improve reliability. | Agent interactions can be harder to reason about; observability needs to be better, not weaker, across chains of tool calls. |
| Reliability & resilience patterns (timeouts, retries, circuit breakers, idempotency) | Distributed systems fail in predictable ways. | Agent chains can amplify failure modes; resilience patterns reduce cascading failures and unsafe retries. |
| Governance & change management (standards, ownership, review, testing) | Reuse at scale demanded consistency. | MCP ecosystems will need governance that scales with adoption, without turning into a delivery bottleneck. |

Chart generated using ChatGPT.

MuleSoft’s Anypoint Platform has historically helped teams operationalize these capabilities through API-led connectivity, API management, policy enforcement, monitoring, and lifecycle tooling. The exact tool matters less than the capability model.
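As a sketch of that capability model for the first two rows of the table (discoverability and contracting), here is a tiny in-memory tool catalog that records an owner, a contract version, and an optional sunset date, and lets a caller discover only versions compatible with the contract it was built against. The `CatalogEntry` fields, the `ToolCatalog` class, and the semantic-versioning rule (a major-version bump signals a breaking change) are assumptions for illustration; a real catalog would live in an API management or governance platform, not in application code.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    owner: str                     # accountable team (governance)
    version: str                   # contract version as "MAJOR.MINOR.PATCH"
    description: str
    sunset: Optional[date] = None  # set when this version is deprecated


def version_tuple(version: str) -> tuple:
    return tuple(int(part) for part in version.split("."))


class ToolCatalog:
    """Hypothetical catalog: register tool versions, discover compatible ones."""

    def __init__(self) -> None:
        self._entries: list = []

    def register(self, entry: CatalogEntry) -> None:
        self._entries.append(entry)

    def discover(self, name: str, expected_major: int, today: date) -> Optional[CatalogEntry]:
        """Return the newest non-retired version whose major version matches the caller's."""
        candidates = [
            e for e in self._entries
            if e.name == name
            and version_tuple(e.version)[0] == expected_major
            and (e.sunset is None or e.sunset > today)
        ]
        if not candidates:
            return None
        return max(candidates, key=lambda e: version_tuple(e.version))

    def deprecation_warnings(self, today: date) -> list:
        """Deprecated-but-still-live versions, so consumers can plan migrations."""
        return [
            f"{e.name} {e.version} (owner: {e.owner}) retires on {e.sunset.isoformat()}"
            for e in self._entries
            if e.sunset is not None and e.sunset > today
        ]
```

Pinning callers to a major version is one simple way to keep non-breaking improvements flowing while making breaking changes an explicit, announced migration.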

MCP Tools Are Like SOA APIs, With More Moving Parts

A traditional API call can be “deterministic” in intent, but the results are still shaped by forces outside the calling application’s control.

Even before agents, architects learned to ask:

- Could a security policy change alter what data is returned?

- Could archived records, backfills, or bulk updates change the shape of the response?

- Could a downstream system version update change behavior even if the endpoint didn’t change?

We built governance and observability because we accepted a reality: In distributed systems, stability is something you design for—not something you assume.

MCP-to-MCP communication adds further layers of variability:

- Model changes (behavior shifts even when the “interface” looks the same)

- Prompt or instruction changes (policy shifts, guardrail updates, role changes)

- Data drift and data observability gaps (the same long-standing issue, now amplified)

This is the part many people feel as “uncertainty,” and it’s a valid concern. But it’s also a clue.

If agent-to-agent is in your future, your architecture questions need to evolve from:

- “What endpoint do I call?”

to

- “What changed since yesterday that could change the meaning, safety, or correctness of this call—and how will I know?”
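One way to start answering “what changed since yesterday?” is to fingerprint each component that can shift an agent’s behavior (model identifier, instructions, tool contracts, data snapshot metadata) and diff today’s fingerprints against the last known-good set. This is a minimal sketch under stated assumptions: the configuration keys and model name are hypothetical, and changes a vendor makes behind an unchanged identifier would still require vendor-side change notifications.

```python
import hashlib
import json


def fingerprint(component: object) -> str:
    """Short, stable hash of a JSON-serializable component (keys sorted for stability)."""
    canonical = json.dumps(component, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def snapshot(config: dict) -> dict:
    """Fingerprint each behavior-shaping component separately so a diff is explainable."""
    return {key: fingerprint(value) for key, value in config.items()}


def what_changed(previous: dict, current: dict) -> list:
    """Names of components that were added, removed, or modified between snapshots."""
    keys = set(previous) | set(current)
    return sorted(k for k in keys if previous.get(k) != current.get(k))


if __name__ == "__main__":
    # Hypothetical agent configuration; every value here is illustrative.
    yesterday = {
        "model": "example-model-v1",
        "instructions": "Escalate delivery disputes to a human agent.",
        "tools": [{"name": "get_shipment_status", "version": "1.3.1"}],
    }
    today = dict(yesterday, instructions="Offer a refund before escalating delivery disputes.")
    print(what_changed(snapshot(yesterday), snapshot(today)))  # ['instructions']
```

Fingerprinting components separately, rather than hashing the whole configuration at once, is what lets the diff say which layer moved, not just that something did.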

Questions to ask vendors about an agent-to-agent future

You don’t need all the answers today. But you do need to know whether your vendors have a credible plan.

Here are starter questions I recommend:

- Discoverability: How do we catalog MCP tools/agents and make safe usage discoverable across teams?

- Contracting: What is the contract model (inputs, outputs, errors), and how do you manage versioning and deprecation?

- Policy: How are auth, permissions, and data access policies defined and enforced consistently across agents?

- Observability: Can we trace an end-to-end agent interaction (including tool calls) with correlation IDs and audit logs?

- Change awareness: How do you alert us to model/prompt/tool changes that could alter outcomes?

- Resilience: What patterns exist for retries, timeouts, fallbacks, and safe failure modes?

- Testing: How do we test agent-to-agent workflows in a way that’s repeatable and meaningful?

- Governance: What operating model do you recommend so governance scales without becoming a blocker?
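Several of these questions (observability, resilience, and safe retries) come down to a few concrete mechanics. Here is a minimal sketch, assuming a hypothetical `send` callable that performs one tool call and raises `TimeoutError` when the tool does not answer: every attempt carries the same correlation ID, so a trace can stitch the chain together, and the same idempotency key, so a retried action such as a refund is not executed twice. Retries are bounded with exponential backoff, and each attempt is logged. The header names are illustrative, not part of any specific product.

```python
import logging
import time
import uuid
from typing import Callable, Optional

log = logging.getLogger("agent.tool_calls")


def call_with_retries(
    send: Callable[[dict], dict],
    payload: dict,
    correlation_id: Optional[str] = None,
    max_attempts: int = 3,
    base_delay: float = 0.2,
) -> dict:
    """Invoke a tool with bounded retries, one correlation ID, and one idempotency key.

    `send` is a hypothetical transport callable supplied by the caller; it is
    expected to raise TimeoutError when the downstream tool does not answer.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    correlation_id = correlation_id or str(uuid.uuid4())  # reuse the caller's trace if given
    headers = {
        "X-Correlation-ID": correlation_id,    # illustrative header names
        "Idempotency-Key": str(uuid.uuid4()),  # identical on every retry of this call
    }
    for attempt in range(1, max_attempts + 1):
        try:
            result = send({"headers": headers, "body": payload})
            log.info("tool call ok corr=%s attempt=%d", correlation_id, attempt)
            return result
        except TimeoutError:
            log.warning("tool call timed out corr=%s attempt=%d", correlation_id, attempt)
            if attempt == max_attempts:
                raise  # fail visibly instead of retrying forever
            time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    raise AssertionError("unreachable: loop always returns or raises")
```

The idempotency key only helps if the receiving tool honors it, which is exactly the kind of contract detail worth raising under the Resilience question.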

Where to Look for these Capabilities in the Salesforce Portfolio

MuleSoft

If you’re evaluating MCP-to-MCP or agent-to-agent interoperability in the Salesforce ecosystem, it’s not surprising that MuleSoft is at the center of this conversation.

MuleSoft has long been strong in API gateways, API management, and interoperability—exactly the foundation you need when agents must reliably access and act on information across the enterprise. Agents don’t replace APIs; they depend on them.

That said, I have not personally evaluated Salesforce’s MCP capabilities end-to-end.

Informatica

Informatica’s long-standing strengths in data cataloging, metadata management, and lineage can become real assets as agent ecosystems scale. These capabilities are especially valuable when you need to explain where information came from, how it was transformed, and what downstream decisions it influenced. In practice, strong catalog + lineage capabilities can complement what I’d expect MuleSoft to provide around access control, orchestration, and resilience.

Agentforce + Data 360

Any Salesforce portfolio view would feel incomplete without Agentforce. One of Agentforce’s strengths is its ability to take deterministic actions through Flow and guided automation, while also drawing on contextual first-party and third-party insights via Data 360 capabilities (for example, governed data access patterns that help agents reason with better context).

This foundation is essential, because the point of interoperability isn’t just “agents talking to agents.” It’s enabling agents that can reliably act, coordinate, and deliver outcomes across systems.

Conclusion

The conversation that sparked this article was simple: is MCP-to-MCP interoperability worth discussing now? The answer is yes, not because the standards are settled, but because the architectural questions are familiar. We’ve navigated this before with SOA, and the lessons apply directly. Discoverability, contracting, security, observability, resilience, and governance weren’t nice-to-haves then, and they aren’t now. The organizations that invest in the right integration foundation today (rather than wiring point-to-point solutions they’ll eventually need to rebuild) are the ones that will scale cleanly as agent ecosystems mature. The tools are newer. The stakes feel higher. But the blueprint already exists.

If you have evaluated Salesforce’s MCP capabilities, I’d genuinely love to learn from your experience. Drop a comment, or tag me when you publish your findings, and share what you found most compelling, what felt immature, and what you’re watching next.

Resources

If you want to read more, here are a few articles to help you along the way. I like multi-sourcing my information.

What is MCP, ACP, and A2A – Dell Boomi Article

MuleSoft MCP server documentation

Introducing Model Context Protocol – Anthropic

May 2025 article on Informatica adding support for agentic interoperability
